How To Master Digital Soundscapes With AI Music Generator Technology

The modern creative landscape often presents a daunting barrier for those who possess brilliant conceptual ideas but lack formal training in complex digital audio workstations. Many independent creators find themselves trapped in a cycle of frustration, where the inability to compose or produce high-quality soundtracks limits the professional appeal of their video content or personal projects. This technical bottleneck frequently results in missed opportunities and a reliance on generic, overused stock music that fails to capture the unique essence of a specific vision. Fortunately, the emergence of a sophisticated AI Music Generator has fundamentally altered this dynamic, offering a bridge between raw inspiration and studio-grade auditory output.

By leveraging advanced neural networks, writers and content producers can now bypass the traditional steep learning curve of music theory and sound engineering. Integrating a Lyrics to Song AI into the creative workflow allows for the seamless transformation of written stanzas into melodic compositions that feature realistic vocal performances. This shift does not replace human artistry but rather acts as a powerful catalyst for exploration, enabling users to test different genres and moods within seconds rather than days. In my testing, the ability to rapidly iterate through various sonic textures has proven to be an invaluable asset for refining the emotional impact of a project before committing to a final version.

Strategic Implementation Of Artificial Intelligence In Professional Music Production

While some may view automated composition as a simplified tool, the underlying architecture of ToMusic AI reveals a complex system designed for high-fidelity output. The platform utilizes a series of distinct models, ranging from V1 to V4, each engineered to handle different levels of harmonic complexity and vocal nuance. Professional creators often find that these models provide a solid foundation for layering, where the AI-generated stems serve as the primary rhythmic or melodic core of a larger arrangement. This hybrid approach maintains the speed of automation while preserving the granular control required for high-end commercial projects.

The industry has seen a significant shift toward “democratized production,” where the cost of entry for creating a broadcast-quality song has plummeted. Previously, securing a professional vocalist and an experienced producer would require a substantial budget and significant logistical coordination. Today, the ability to generate royalty-free tracks with high-quality instruments and vocals directly from a browser interface has empowered a new generation of digital nomads and small-scale agencies. In my observations, the stability of the newer V4 models provides a level of consistency in vocal timbre and rhythmic alignment that was unattainable in earlier iterations of generative sound technology.

Navigating The Transition From Textual Concepts To Auditory Reality

The transition from a text prompt to a full musical arrangement involves a sophisticated interpretative process. When a user inputs a description, the AI must decode not just the literal words but the implied mood, tempo, and genre-specific tropes. For instance, a prompt describing a “late-night jazz atmosphere” requires the system to understand the specific syncopation and instrumentation associated with that style. The ToMusic AI platform excels in this interpretive layer, allowing users to specify parameters through natural language that would traditionally require a deep knowledge of musicology.

Optimizing Vocal Realism And Harmonic Balance In Generated Tracks

One of the most challenging aspects of automated music has always been the reproduction of the human voice. Older systems often produced robotic or “uncanny valley” results that lacked emotional resonance. However, the current iteration of ToMusic V4 demonstrates a marked improvement in breath control and pitch inflection. While it remains true that the results are highly dependent on the quality of the initial prompt, the ability to generate realistic singing voices from lyrics has opened doors for songwriters to demo their work with professional-sounding vocals almost instantaneously.

| Production Aspect | Traditional Studio Method | ToMusic AI Workflow |
| --- | --- | --- |
| Time Investment | Days to Weeks | Seconds to Minutes |
| Technical Skills | Advanced DAW & Theory | Natural Language Input |
| Cost Efficiency | High (Equipment & Labor) | Low (Subscription Based) |
| Iteration Speed | Slow and Costly | Rapid and Unlimited |
| Vocal Availability | Requires Session Singers | Integrated AI Vocalists |

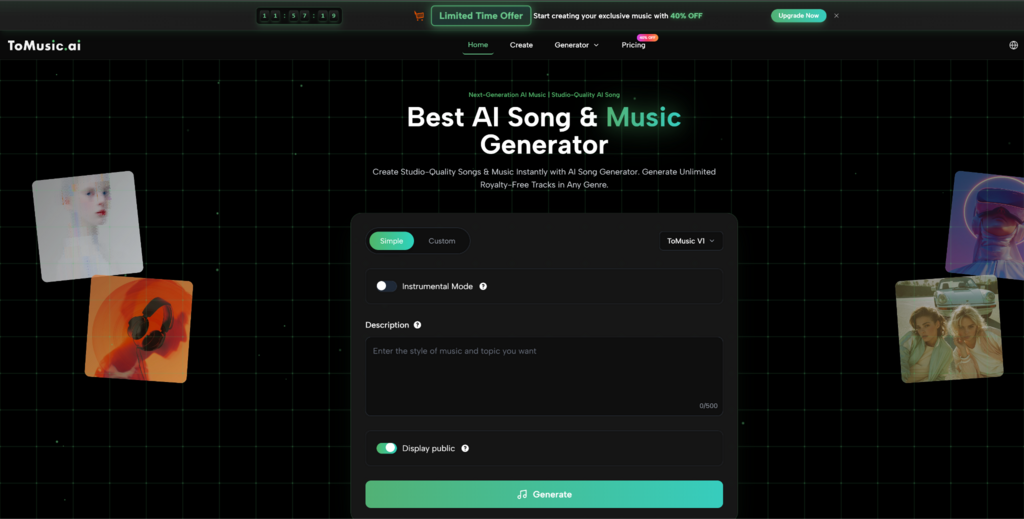

Practical Steps To Generate High Fidelity Music Online

- Input Your Creative Content: Begin by entering your song description or full lyrics into the generator interface. You can provide up to 500 characters of descriptive text to guide the AI on the desired mood and style.

- Select Your Performance Mode: Choose between Custom Mode for detailed control or Simple Mode for a quicker process. At this stage, you can also toggle the Instrumental Mode if you only require background music without vocals.

- Model Selection and Generation: Select the appropriate AI model, such as the high-fidelity V4 for complex songs or V1 for simpler tasks, then click the generate button to receive your polished audio track.
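For readers who like to plan a batch of prompts before opening the browser, the three steps above can be sketched as a small data structure. This is only an illustrative sketch: the article describes a browser interface, not a programmatic API, so `SongRequest`, `build_payload`, and every field name below are hypothetical. The only details taken from the text are the 500-character description limit, the Simple and Custom modes, the Instrumental toggle, and the V1 to V4 model range.

```python
from dataclasses import dataclass, asdict

# Values taken from the steps above; all names here are hypothetical.
MAX_DESCRIPTION_CHARS = 500
VALID_MODES = {"simple", "custom"}
VALID_MODELS = {"V1", "V2", "V3", "V4"}


@dataclass
class SongRequest:
    """Hypothetical record of the choices made in the three steps."""
    description: str            # step 1: song description or lyrics
    mode: str = "simple"        # step 2: "simple" or "custom"
    instrumental: bool = False  # step 2: True for music without vocals
    model: str = "V4"           # step 3: one of V1 through V4

    def validate(self) -> None:
        """Raise ValueError if a choice falls outside what the steps allow."""
        if not self.description.strip():
            raise ValueError("description must not be empty")
        if len(self.description) > MAX_DESCRIPTION_CHARS:
            raise ValueError(
                f"description is {len(self.description)} characters; "
                f"the limit is {MAX_DESCRIPTION_CHARS}"
            )
        if self.mode not in VALID_MODES:
            raise ValueError(f"unknown mode: {self.mode!r}")
        if self.model not in VALID_MODELS:
            raise ValueError(f"unknown model: {self.model!r}")


def build_payload(request: SongRequest) -> dict:
    """Validate the request and return it as a plain dictionary."""
    request.validate()
    return asdict(request)


if __name__ == "__main__":
    request = SongRequest(
        description="late-night jazz atmosphere, slow tempo, brushed drums",
        mode="custom",
        instrumental=True,
    )
    print(build_payload(request))
```

Checking the description length up front is the main practical benefit here: a prompt drafted in a text editor can easily run past 500 characters, and trimming it deliberately tends to give better results than letting it be cut off.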

Expanding Creative Horizons Through Specialized Generative Tools

Beyond general song creation, the platform offers specialized tools that cater to niche creative needs. Tools like the Dream Song Generation or Mood Song Generation are specifically tuned to produce soundscapes that align with specific psychological or atmospheric states. This specialization is particularly useful for filmmakers who need to evoke a precise emotion in a scene. While these tools are remarkably consistent, it is important to acknowledge that they are not a “set and forget” solution. Achieving a truly unique sound often requires multiple generations and a keen ear to select the best output, reminding us that the human element of selection and curation remains central to the creative process.

Alexia is the author at Research Snipers covering all technology news including Google, Apple, Android, Xiaomi, Huawei, Samsung News, and More.