GitHub AI Copilot Makes Serious Security Mistakes

This is by no means a matter of the occasional slip-up. A larger study, whose results have now been published, found that the code generated by Copilot could be classified as problematic in 40 percent of all cases. It would therefore be fatal if programmers simply accepted the help offered without subjecting it to closer examination.

Copilot goes much further than the code completion that has long existed in many development environments, which only completes names of functions and values and other small elements to save typing. With that kind of completion, the code structure still comes entirely from the programmer.

Too good to be true

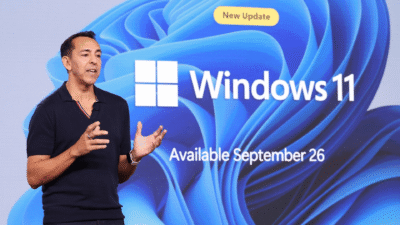

However, Microsoft said that AI systems are already advanced enough to do more. For GitHub Copilot, variable declarations and comments, which the algorithm analyzes, are sufficient. From these, the AI derives which function is required and independently writes the code for it.
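To make this concrete, here is a minimal sketch of what a comment-plus-signature prompt and a plausible completion could look like, and why such a completion still needs review. It is a hypothetical illustration and is not taken from Copilot output or from the study; the `users` table and the function names `find_user_unsafe`, `find_user_safe` and `demo` are assumptions made for the example.

```python
import sqlite3


# The kind of prompt a programmer might write: a comment and a signature.
# Return the row for the given username, or None if there is no such user.
def find_user_unsafe(conn: sqlite3.Connection, username: str):
    # Hypothetical completion that looks fine at first glance: the input is
    # pasted directly into the SQL text, so a crafted username can change
    # the meaning of the query (SQL injection).
    query = f"SELECT id, name FROM users WHERE name = '{username}'"
    return conn.execute(query).fetchone()


def find_user_safe(conn: sqlite3.Connection, username: str):
    # Reviewed version: the "?" placeholder keeps the input as plain data.
    query = "SELECT id, name FROM users WHERE name = ?"
    return conn.execute(query, (username,)).fetchone()


def demo():
    # Hypothetical in-memory table with a single user, for illustration only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("INSERT INTO users (name) VALUES ('alice')")
    crafted = "nobody' OR '1'='1"
    # Returns the results of both variants for the same crafted input.
    return find_user_unsafe(conn, crafted), find_user_safe(conn, crafted)
```

Calling `demo()` passes the same crafted input to both variants: the unsafe one returns alice's row even though no user has that name, while the parameterized one returns `None`. Both functions satisfy the comment for ordinary input, which is why a quick glance is not enough to tell them apart.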

Microsoft is well aware that one shouldn’t expect too much from the automatic system. It also states explicitly that blindly relying on the generated code is not a good idea. Even so, the result of the external investigation is likely to surprise many, since such a high error rate suggests a rather half-baked feature. Ultimately, though, this also comes down to the peculiarities of AI development, which requires an extremely large, high-quality body of training data to deliver correspondingly high-quality results.
